AI assistants are now embedded in most mainstream development workflows, but how developers use them is more conservative than the product demos suggest. A consistent pattern shows up across major developer surveys and platform telemetry: developers are more comfortable letting AI modify existing code than asking it to invent new logic from scratch.

That preference isn’t just habit. It reflects how engineers evaluate risk, how software correctness is proven, and where “good enough” is acceptable.

What the reports show

1) Usage is widespread, but trust is not.

In the AI section of the 2025 Stack Overflow Developer Survey, respondents report heavy usage, particularly among professional developers, many of whom say they use AI tools daily or weekly. At the same time, they express low confidence in the accuracy of the output: more developers distrust AI output than trust it (46% vs. 33%), and only a small minority “highly trust” the results. [Stack Overflow]

That tension (high usage, low trust) is exactly where refactoring becomes the “safe” workload: you can accept help while staying skeptical.

2) Developers want AI for the mechanical parts of the job, not the core reasoning.

JetBrains’ 2025 ecosystem reporting emphasizes that developers are happy to delegate repetitive, low-creativity tasks (boilerplate, documentation, summarizing changes) and prefer to remain in control of complex, higher-risk work—explicitly including debugging and designing application logic.

This aligns neatly with real-world AI behavior: it’s often strong at pattern-based transformations, weaker at domain-specific intent.

3) The tools themselves are shifting toward “maintenance work.”

On the GitHub side, Octoverse signals how central AI has become. For example, it reports that a large share of new developers adopt Copilot very quickly, and it highlights momentum in 2025 around “agent” features such as automated code review. [The GitHub Blog] That emphasis is not just about producing new code; it’s about improving and validating existing code. GitHub’s changelog for Copilot code review frames the feature as a way to offload basic reviews: surfacing bugs and performance issues and even suggesting fixes. Again, that is a maintenance and quality posture rather than greenfield creation.

Taken together, the reported trend is: broad adoption, persistent skepticism, and product investment in workflows that touch existing code and quality gates.

Why this pattern makes sense (interpretation)

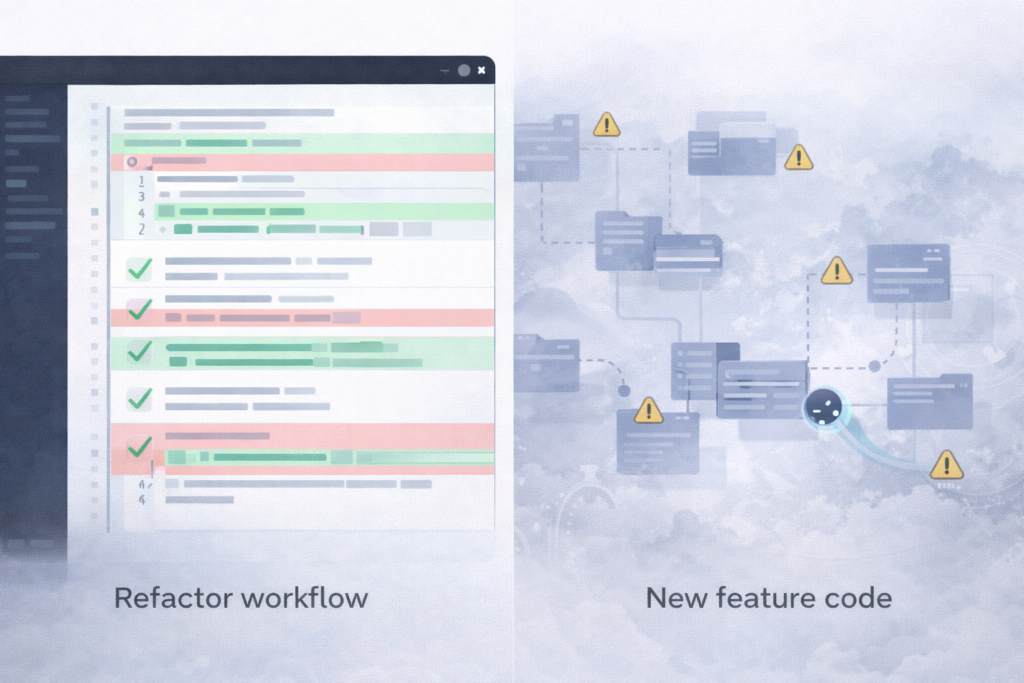

The gap between “writing new code” and “refactoring” is basically the gap between unknown correctness and bounded correctness.

Refactoring has built-in guardrails

Refactoring is usually constrained by invariants: behavior should remain the same, tests should pass, types should still check, performance shouldn’t regress. That makes it easier to trust AI conditionally.

Developers can ask for:

- “Extract this method and reduce nesting”

- “Replace this callback chain with coroutines/async”

- “Rename these symbols for clarity”

- “Remove duplication across these two classes”

- “Migrate deprecated API calls to the new API”

Even if the AI isn’t perfect, the outcome is often a diff you can review quickly—then validate with compilation and tests. If it fails, the failure is usually local and diagnosable.
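To make the guardrail idea concrete, here is a minimal Python sketch of an “extract this method and reduce nesting” refactor, paired with a quick equivalence check. The `Order` model, the shipping rules, and every function name are hypothetical, invented only for illustration; in a real codebase, the existing test suite would play the role of `check_equivalent`.

```python
from dataclasses import dataclass


@dataclass
class Order:
    # Hypothetical order model; amounts are integer cents to keep comparisons exact.
    subtotal_cents: int
    country: str
    is_member: bool


def shipping_cost_before(order: Order) -> int:
    # Original version: the pricing rules are buried in nested branches.
    if order.subtotal_cents > 0:
        if order.country == "US":
            if order.is_member:
                return 0
            else:
                if order.subtotal_cents >= 5000:
                    return 0
                else:
                    return 500
        else:
            return 1500
    else:
        raise ValueError("empty order")


def _domestic_cost(order: Order) -> int:
    # Extracted helper: members and orders of $50 or more ship free.
    if order.is_member or order.subtotal_cents >= 5000:
        return 0
    return 500


def shipping_cost_after(order: Order) -> int:
    # Refactored version: guard clauses replace the nesting.
    if order.subtotal_cents <= 0:
        raise ValueError("empty order")
    if order.country != "US":
        return 1500
    return _domestic_cost(order)


def check_equivalent() -> int:
    # Stand-in for a real test suite: run both versions over a grid of
    # inputs (including the error path) and require identical outcomes.
    checked = 0
    for subtotal in (-100, 0, 1, 4999, 5000, 12000):
        for country in ("US", "DE"):
            for is_member in (True, False):
                order = Order(subtotal, country, is_member)
                outcomes = []
                for fn in (shipping_cost_before, shipping_cost_after):
                    try:
                        outcomes.append(("ok", fn(order)))
                    except ValueError as exc:
                        outcomes.append(("error", str(exc)))
                assert outcomes[0] == outcomes[1], (order, outcomes)
                checked += 1
    return checked
```

The check covers 24 input combinations. If the refactor had, say, turned `>=` into `>` in the free-shipping threshold, the 5000-cent case would fail right away, which is the kind of local, diagnosable failure that makes refactoring a comfortable job to delegate.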

New logic is where mistakes are expensive and subtle

When you ask an AI to write new features, you’re asking it to infer product intent, edge cases, and non-obvious constraints—things that often aren’t present in the code at all.

Examples:

- authorization rules that depend on business policy

- tricky concurrency invariants

- performance budgets in hot paths

- “this must never happen” states enforced by organizational convention

- security implications and data handling requirements

This is exactly the kind of work where survey respondents report lower trust in AI. In the 2025 Stack Overflow survey, a meaningful share of developers rate AI tools as bad or very poor at handling complex tasks, and many avoid using AI for those tasks altogether.

Maintenance work benefits from “good suggestions”, not perfect answers

A lot of refactoring and cleanup work is pattern recognition:

- normalize error handling

- improve nullability/type usage

- apply lint-driven transformations

- convert repetitive code into a shared helper

- modernize language constructs

AI is strong at seeing and applying patterns across files—especially when you can point it at a concrete example (“make the rest look like this”) and then evaluate the patch.
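A small, hypothetical Python illustration of two items from the list above, normalizing error handling and converting repetitive code into a shared helper: two settings readers that duplicate parse-and-fall-back logic with slightly different exception handling, followed by the consolidated version you might get after pointing an assistant at one cleaned-up function and asking it to make the rest match. All names here (`read_port`, `_int_setting`, and so on) are invented for this sketch.

```python
from typing import Optional


# Before: the same parse-and-fall-back logic is copied, with drift between
# copies (one catches every Exception, the other only ValueError).
def read_port_before(env: dict[str, str]) -> Optional[int]:
    try:
        return int(env["PORT"])
    except Exception:
        return None


def read_timeout_before(env: dict[str, str]) -> Optional[int]:
    if "TIMEOUT" not in env:
        return None
    try:
        return int(env["TIMEOUT"])
    except ValueError:
        return None


# After: one helper states the error-handling policy once.
def _int_setting(env: dict[str, str], key: str) -> Optional[int]:
    # A missing key or a non-integer value maps to None; any other
    # exception propagates instead of being silently swallowed.
    raw = env.get(key)
    if raw is None:
        return None
    try:
        return int(raw)
    except ValueError:
        return None


def read_port(env: dict[str, str]) -> Optional[int]:
    return _int_setting(env, "PORT")


def read_timeout(env: dict[str, str]) -> Optional[int]:
    return _int_setting(env, "TIMEOUT")
```

For string-valued inputs, the old and new readers return the same results, so the patch is easy to verify with a handful of cases. The one deliberate policy shift, no longer catching every `Exception`, is the kind of detail a reviewer should confirm rather than wave through.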

Test generation and legacy-code explanation are “adjacent trust” tasks

Developers often trust AI for tasks that support correctness rather than define it.

Test generation: AI can propose unit tests, edge cases, and fixtures quickly. You still own whether the assertions reflect the intended behavior, but the busywork shrinks. When generated tests are wrong, they often fail fast or look obviously mismatched during review; the riskier case is a test that quietly locks in the current, possibly buggy, behavior.
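Here is a hypothetical example of the kind of tests an assistant might propose for an invented `slugify` function. It is written in pytest style (plain `assert` statements inside `test_` functions), so it runs under pytest or by calling the functions directly. The comments mark where a human still has to decide whether an assertion describes intended behavior or merely current behavior.

```python
import re


def slugify(title: str) -> str:
    # Hypothetical function under test: lowercase, collapse runs of
    # non-alphanumeric ASCII characters into one hyphen, trim the ends.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    return slug.strip("-")


# Tests of the kind an assistant might propose. Each still needs a human
# to confirm it matches the intended behavior, not just the current code.
def test_basic_title():
    assert slugify("Hello World") == "hello-world"


def test_collapses_punctuation_and_spaces():
    assert slugify("AI -- and   refactoring!") == "ai-and-refactoring"


def test_empty_and_symbol_only_input():
    # Edge case: is an empty slug acceptable, or should this raise?
    # The code returns "", but only the product owner knows which is right.
    assert slugify("") == ""
    assert slugify("!!!") == ""


def test_non_ascii_is_dropped():
    # Locks in current behavior: accented letters are stripped, not
    # transliterated. If that is a bug, this test would silently preserve it.
    assert slugify("Café Déjà Vu") == "caf-d-j-vu"
```

The first two tests are pure busywork savings. The last two are where review matters: they pass, but passing only proves the tests agree with the code, not with the product.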

Explaining legacy code: Asking “what does this function do?” or “why is this state machine structured like this?” is lower risk than “add a new state.” Even when explanations aren’t perfect, they can accelerate onboarding and guide where to look next—without being the final authority.

The practical takeaway

What’s changing isn’t that developers suddenly “trust AI.” It’s that they’re learning where AI is reliably useful under scrutiny: producing diffs that are reviewable, reversible, and testable.

Refactoring, cleanup, test scaffolding, and legacy-code explanation fit that profile. New feature logic often doesn’t—at least not without tighter specs, stronger verification, and the kind of accountability that surveys suggest developers still reserve for themselves.